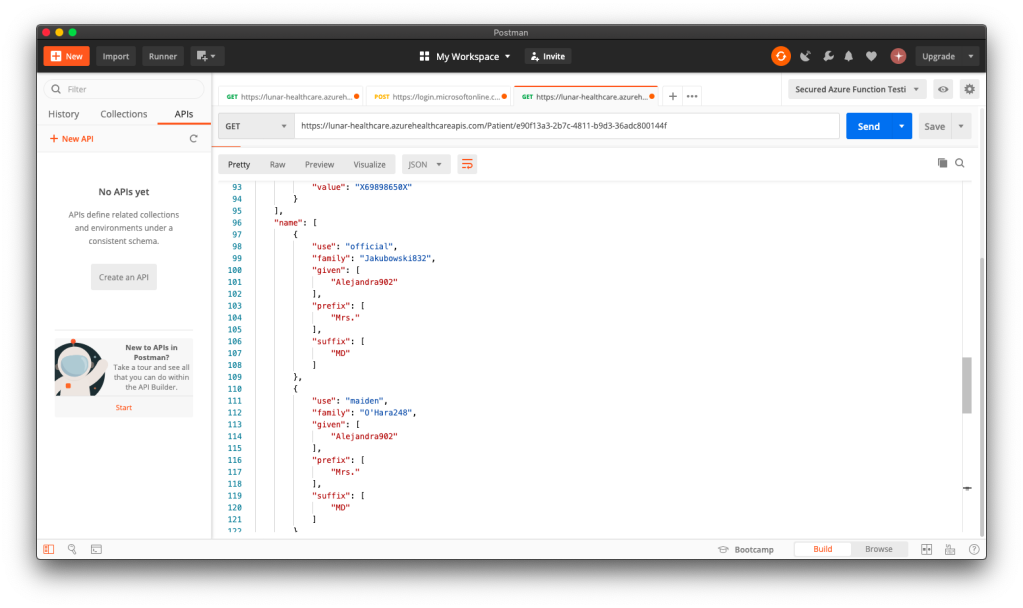

For the fourth consecutive year, I have renewed my Azure Developer Associate certification. It is a valuable discipline that keeps my knowledge of the Azure ecosystem current and sharp. The performance report I received this year was particularly insightful, highlighting both my strengths in security fundamentals and the expected gaps in platform-specific nuances, given my recent work in AWS.

Objectives

Renewing an Azure certification is a hallmark of a professional craftsman: it sharpens our tools and deepens our knowledge of the trade. For a junior or mid-level engineer, this path of structured learning and certification is the non-negotiable foundation of a solid career. It is the path I walked myself. It builds the grammar of our trade.

However, for a senior engineer, for an architect, the game has changed. The world is now saturated with competent craftsmen who know the grammar. In the age of AI-assisted coding and brutal corporate “flattening,” simply knowing the tools is no longer a defensible position. It has become table stakes.

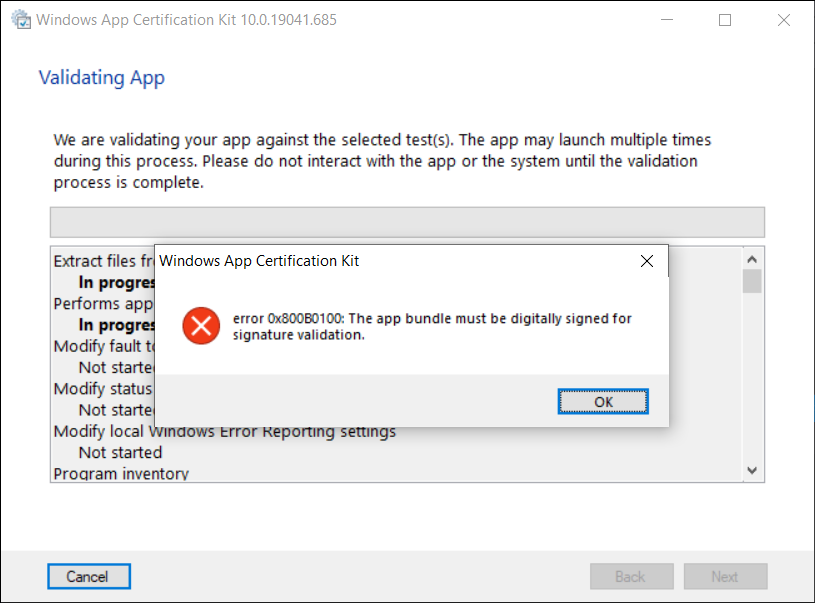

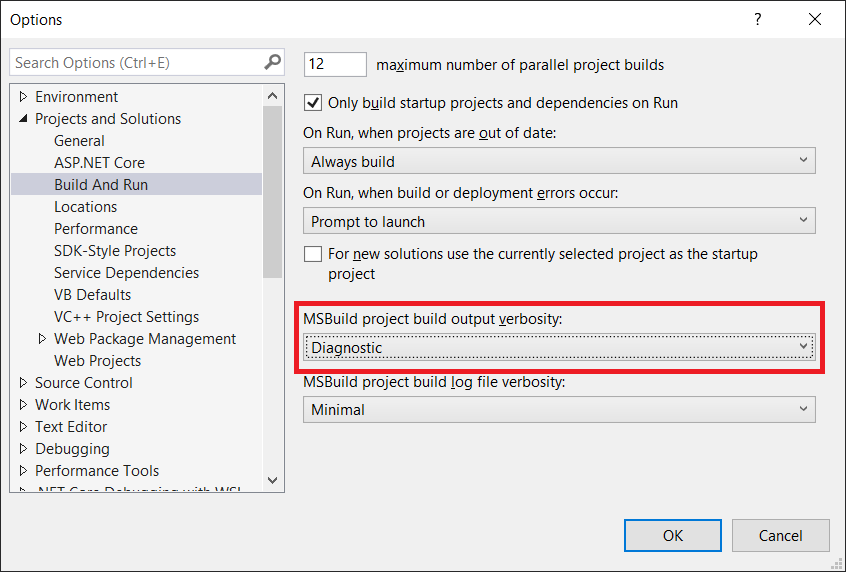

The paradox of the senior cloud software engineer is that the very map that got us here, i.e. the structured curriculum and the certification path, is insufficient to guide us to the next level. The renewal assessment results I received for Microsoft Certified: Azure Developer Associate were a perfect map of the existing territory. However, an architect’s job is not to be a master of the known world. It is to be a cartographer of the unknown. The report correctly identified that I need to master Azure-specific trade-offs, like choosing ‘Session’ consistency over ‘Strong’ for low-latency scenarios in Azure Cosmos DB. The senior engineer learns that rule. The architect must ask a deeper question: “How can I build a model that predicts the precise cost and P99 latency impact of that trade-off for my specific workload, before I write a single line of code?”

About the Results

Let’s make this concrete by looking at the renewal assessment report itself. It was a gift, not because of the score, but because it is a perfect case study in the difference between the Senior Engineer’s path and the Architect’s.

Where the report suggests mastering the five consistency levels of Azure Cosmos DB, it is prescribing an act of knowledge consumption. The architect’s impulse is to ask a different question entirely: “How can I quantify the trade-off?” I do not just want to know that Session is faster than Strong. I should know, for a given workload, how much faster, at what dollar cost per million requests, and with what measurable impact on data integrity. The architect’s response is to build a model to turn the vendor’s qualitative best practice into a quantitative, predictive economic decision.
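To make "dollar cost per million requests" tangible, here is a minimal sketch of such a model in Python. It rests on one documented rule of thumb: in Azure Cosmos DB, reads at Strong or Bounded Staleness consistency consume roughly twice the request units (RUs) of reads at the weaker levels. Everything else is an assumption for illustration: the `Workload` type, the `cost_per_million_reads` function, and above all the price, which is a placeholder to be replaced with the figure from your own bill.

```python
from dataclasses import dataclass

# Approximate read-cost multipliers relative to Session consistency.
# Strong and Bounded Staleness reads cost about 2x the RUs of the weaker
# levels; verify against measurements from your own account.
READ_RU_MULTIPLIER = {
    "Strong": 2.0,
    "BoundedStaleness": 2.0,
    "Session": 1.0,
    "ConsistentPrefix": 1.0,
    "Eventual": 1.0,
}


@dataclass(frozen=True)
class Workload:
    """Hypothetical workload description; both fields must come from you."""
    base_read_ru: float          # measured RUs for one read at Session
    price_per_million_ru: float  # placeholder price, NOT an official rate


def cost_per_million_reads(workload: Workload, level: str) -> float:
    """Estimated cost of one million reads at the given consistency level."""
    ru_per_read = workload.base_read_ru * READ_RU_MULTIPLIER[level]
    # One million reads consume `ru_per_read` million RUs in total.
    return ru_per_read * workload.price_per_million_ru


if __name__ == "__main__":
    # Assumed numbers: a 1 RU point read and a made-up price of 0.25.
    workload = Workload(base_read_ru=1.0, price_per_million_ru=0.25)
    for level in ("Session", "Strong"):
        print(level, cost_per_million_reads(workload, level))
```

This covers only the read-cost side of the question. Latency and data-integrity impact need measurement or simulation against the real workload, and write costs follow different rules that this sketch does not attempt to model.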

This pattern continues with managed services. The report correctly noted my failure to memorise the specific implementation of Azure Container Apps. The path it offers is to better learn the abstraction. The architect’s path is to become professionally paranoid about abstractions. The question is not “What is Container Apps?” but “Why does this abstraction exist, and what are its hidden costs and failure modes?” The architect’s response is to design experiments or simulations to stress-test the abstraction and discover its true operational boundaries, not just to read its documentation.

This is the new mandate for senior engineers in a world where we keep hearing about senior engineers being out of work: we must evolve from being consumers of complexity to being creators of clarity. We must move beyond mastering the vendor’s pre-defined solutions and begin forging our own instruments to see the future.

From Cert to Personal Project

This is why, in parallel to maintaining my certifications, I have embarked on a different kind of professional development. It is a path of deep, first-principles creation. I am building a discrete event simulation engine not as a personal hobby project, but as a way to understand more about the most expensive and unpredictable problems in our industry. My certification proves I can solve problems the “Azure way.” This new work is about discovering the fundamental truths that govern all cloud platforms.
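For readers unfamiliar with the technique, the core of a discrete event simulation fits in a few lines. The sketch below is not my engine. It is a generic, minimal illustration in Python of the idea: a priority queue of timestamped events drives a single FIFO server, and the output is per-request latency from which a P99 can be read. The names `simulate_single_server` and `percentile` are hypothetical, and the single-server model is a deliberate simplification.

```python
import heapq
import itertools
import math
from collections import deque


def simulate_single_server(arrival_times, service_times):
    """Return per-request latency (queueing + service) for one FIFO server."""
    tie_breaker = itertools.count()  # keeps heap ordering stable on equal times
    events = []
    for request_id, t in enumerate(arrival_times):
        heapq.heappush(events, (t, next(tie_breaker), "arrival", request_id))

    waiting = deque()  # requests that have arrived but not started service
    busy = False
    latencies = {}

    while events:
        now, _, kind, request_id = heapq.heappop(events)
        if kind == "arrival":
            waiting.append(request_id)
        else:  # "departure": the request in service has finished
            latencies[request_id] = now - arrival_times[request_id]
            busy = False
        if not busy and waiting:
            next_id = waiting.popleft()
            busy = True
            finish = now + service_times[next_id]
            heapq.heappush(events, (finish, next(tie_breaker), "departure", next_id))

    return [latencies[i] for i in range(len(arrival_times))]


def percentile(values, p):
    """Nearest-rank percentile, e.g. p=99 for P99."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


if __name__ == "__main__":
    # Three requests arriving at once, each needing 1.0 time unit of service.
    latencies = simulate_single_server([0.0, 0.0, 0.0], [1.0, 1.0, 1.0])
    print(latencies, percentile(latencies, 99))
```

Replacing the fixed arrival and service times with samples drawn from measured distributions is what turns a toy like this into an instrument for asking "what happens to P99 if load doubles?" before anything is deployed.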

Certifications are an essential foundation. They represent the bedrock of our shared professional knowledge and a commitment to maintaining a common standard of excellence. However, they are not the lighthouse, and they are not, by themselves, the final destination. In this new era, we must be both.

Therefore, my next major “proof-of-work” will not be another certificate. It will be the first in a series of public, data-driven case studies derived from my personal project.

Ultimately, a certificate proves that we are qualified and contributing members of our professional ecosystem. This next body of work is intended to prove something more than that. We need to actively solve the complex, high-impact problems that challenge our industry. In this new era, demonstrating both our foundational knowledge and our capacity to create new value is no longer an aspiration. Instead, it is the new standard.

Together, we learn better.