The Internet of Things (IoT) is here today, and it begins with the data, devices, and services already at work in your organization. When your “things” are connected to each other and to the cloud, you create new ways to improve efficiency, enable innovation, and transform your business.

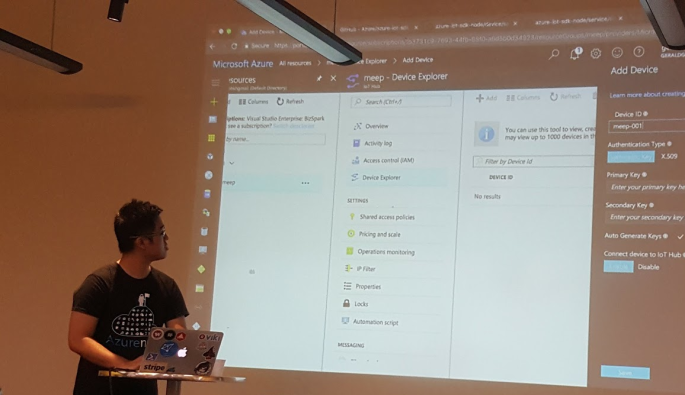

This line is printed on the front page of a Microsoft booklet distributed during the lunchtime workshop “Connecting and Building the Internet of Things (IoT)” conducted by Gerald Goh, Microsoft Technical Evangelist. Gerald shared with us technologies such as AMQP, MQTT, Message Broker in Azure, Device Explorer, and so on.

IoT hasn’t gone totally mainstream, however, and we have yet to feel its impact. In many ways it is roughly where the big data movement was a few years ago: mainly a buzzword that’s not yet widely understood.

Nevertheless, Gerald’s workshop gave me, a web developer who doesn’t know much about this field, a helpful quick start on IoT. After some reading and experimenting, I learned more about what Microsoft Azure can do for IoT, so I’d like to share what I’ve learnt so far about Azure IoT Hub.

Message Broker

I work at Changi Airport. In the airport, we have several shops serving travelers and visitors. Most of the shops have a back-end system that integrates several systems, such as the retail system, e-commerce website, payment system, Changi Rewards system, inventory management system, and finance system.

So there will be cases where, when a customer buys something at the shop, the retail system needs to send a request to the payment system. Then, when the purchase is successful, another request will be sent to the inventory management system and the finance system.

I’m not too sure how the shops link their different systems, but this kind of point-to-point integration leads to a large number of connections among the systems. Hence, the developers of those systems may find a message broker useful.

A message broker is a physical component that handles the communication between systems. A system sends a message to the message broker, providing the logical names of the receiving systems. The message broker then looks up the receiving systems and passes the message to them.
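To make the idea concrete, here is a minimal in-memory sketch of a broker in C#. It is only an illustration of the pattern described above, not how any real broker (or Azure service) is implemented. The class `InMemoryBroker`, its methods, the logical names, and the sample message are all made up for this example.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical in-memory broker; not a real product or Azure API.
public sealed class InMemoryBroker
{
    // Maps a logical system name to the handler that receives its messages.
    private readonly Dictionary<string, Action<string>> receivers =
        new Dictionary<string, Action<string>>();

    // A receiving system registers itself under a logical name.
    public void Register(string logicalName, Action<string> handler)
    {
        receivers[logicalName] = handler;
    }

    // The sender only names the receivers; the broker looks them up and
    // forwards the message. Returns how many systems received it.
    public int Send(string message, params string[] logicalNames)
    {
        int delivered = 0;
        foreach (string name in logicalNames)
        {
            Action<string> handler;
            if (receivers.TryGetValue(name, out handler))
            {
                handler(message);
                delivered++;
            }
        }
        return delivered;
    }
}

public static class BrokerDemo
{
    public static void Main()
    {
        var broker = new InMemoryBroker();
        var inventoryInbox = new List<string>();
        var financeInbox = new List<string>();
        broker.Register("inventory", inventoryInbox.Add);
        broker.Register("finance", financeInbox.Add);

        // The retail system talks to the broker only, not to each system.
        int delivered = broker.Send("purchase succeeded", "inventory", "finance");
        Console.WriteLine("Delivered to {0} systems", delivered);
    }
}
```

With a broker in the middle, each system needs just one connection (to the broker) instead of one connection per partner system.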

Messaging Protocols: AMQP and MQTT

Sending a message between systems seems to be an easy task. However, doing it in a reliable and secure manner can be challenging.

As shown in the article “Scalable Eventing over Mesos!”, Autodesk uses AMQP (Advanced Message Queuing Protocol), a messaging protocol designed with the following main characteristics as goals.

- Security

- Reliability

- Interoperability

- Standard

- Open

AMQP 1.0 is the current version of the specification. It is also the primary protocol of Azure Event Hubs and Azure Service Bus Messaging now that SBMP (Service Bus Messaging Protocol), the TCP-based protocol used inside the .NET client library, is being phased out.

Besides AMQP, MQTT (Message Queuing Telemetry Transport) is another open protocol based on TCP/IP for asynchronous messaging, which has been developed and matured over the past few years.

While AMQP is designed to cover a wide range of messaging scenarios, MQTT is designed as an extremely lightweight publish/subscribe message transport for small and simple devices sending small messages on low-bandwidth networks. Hence, MQTT is said to be ideal for mobile applications because of its low power usage and minimized data packets.

MQTT is also simple because it just has five API methods:

- Connect to an MQTT broker;

- Disconnect from an MQTT broker;

- Subscribe to an MQTT topic filter;

- Unsubscribe from an MQTT topic filter;

- Publish MQTT messages.
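The five operations above can be sketched as a tiny in-memory class. This is not a real MQTT client and does not talk to a network. `TinyMqttSketch` and the topic names are hypothetical, made up for illustration. The one piece taken from the MQTT specification is the topic filter wildcards: `+` matches exactly one topic level and `#` matches all remaining levels.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical in-memory stand-in for an MQTT client and broker pair.
public sealed class TinyMqttSketch
{
    private readonly Dictionary<string, Action<string, string>> subscriptions =
        new Dictionary<string, Action<string, string>>();

    public bool IsConnected { get; private set; }

    public void Connect() { IsConnected = true; }

    public void Disconnect() { IsConnected = false; }

    public void Subscribe(string topicFilter, Action<string, string> onMessage)
    {
        EnsureConnected();
        subscriptions[topicFilter] = onMessage;
    }

    public void Unsubscribe(string topicFilter)
    {
        EnsureConnected();
        subscriptions.Remove(topicFilter);
    }

    // Delivers the payload to every subscription whose filter matches the topic.
    public void Publish(string topic, string payload)
    {
        EnsureConnected();
        foreach (var pair in subscriptions)
        {
            if (Matches(pair.Key, topic)) pair.Value(topic, payload);
        }
    }

    // '+' matches one level, '#' matches all remaining levels.
    public static bool Matches(string filter, string topic)
    {
        string[] f = filter.Split('/');
        string[] t = topic.Split('/');
        for (int i = 0; i < f.Length; i++)
        {
            if (f[i] == "#") return true;
            if (i >= t.Length) return false;
            if (f[i] != "+" && f[i] != t[i]) return false;
        }
        return f.Length == t.Length;
    }

    private void EnsureConnected()
    {
        if (!IsConnected) throw new InvalidOperationException("Not connected.");
    }
}

public static class MqttDemo
{
    public static void Main()
    {
        var client = new TinyMqttSketch();
        client.Connect();
        client.Subscribe("sensors/+/temperature",
            (topic, payload) => Console.WriteLine("{0}: {1}", topic, payload));
        client.Publish("sensors/gclRasPi2/temperature", "31.5"); // delivered
        client.Publish("sensors/gclRasPi2/humidity", "70");      // no matching filter
        client.Unsubscribe("sensors/+/temperature");
        client.Disconnect();
    }
}
```

A real client library adds the parts that matter in production, such as quality-of-service levels, keep-alive, and reconnection, but the surface area stays close to these five calls.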

If you are interested in knowing more about how AMQP and MQTT compare, there is a detailed white paper from StormMQ discussing the differences between them.

Brokered Messaging – Service Bus Messaging

When two or more systems want to exchange information, they need a communication facilitator. This is where Microsoft Azure Service Bus comes into the picture.

One of the messaging patterns offered in Azure Service Bus is called Service Bus Messaging, or Brokered Messaging. With it, senders and receivers do not have to be available at the same time.

AMQP 1.0 support has been available in the Service Bus SDK since version 2.1. Since the Service Bus .NET client library uses a dedicated SOAP-based protocol by default, to use AMQP 1.0 we need to say so explicitly by appending TransportType=Amqp to the Service Bus connection string, as shown below.

    <?xml version="1.0" encoding="utf-8" ?>
    <configuration>
      <appSettings>
        <add
          key="Microsoft.ServiceBus.ConnectionString"
          value="Endpoint=sb://[namespace].servicebus.windows.net/;SharedAccessKeyName=RootManageSharedAccessKey;SharedAccessKey=[SAS key];TransportType=Amqp" />
      </appSettings>
    </configuration>

In AMQP transport mode, the sender’s client library serializes the brokered message into an AMQP message so that it can be received and interpreted by a receiver running on a different platform.
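Going back to the connection string: since the transport is chosen by a single key/value pair, it is easy to check which transport a given string selects. The helper below is only a sketch of that check, and `ConnectionStringSketch` is a made-up name rather than part of the Service Bus SDK. The namespace `example` and the key `abc123=` are placeholders.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper; the real SDK parses the connection string itself.
public static class ConnectionStringSketch
{
    // Splits "Key=Value;Key=Value" pairs. Only the first '=' separates key
    // from value, because Base64 SAS keys may themselves end in '='.
    public static Dictionary<string, string> Parse(string connectionString)
    {
        var settings = new Dictionary<string, string>(StringComparer.OrdinalIgnoreCase);
        string[] parts = connectionString.Split(
            new[] { ';' }, StringSplitOptions.RemoveEmptyEntries);
        foreach (string part in parts)
        {
            int separator = part.IndexOf('=');
            if (separator <= 0) continue; // skip malformed fragments
            string key = part.Substring(0, separator).Trim();
            settings[key] = part.Substring(separator + 1).Trim();
        }
        return settings;
    }

    // True only when the string explicitly asks for the AMQP transport.
    public static bool UsesAmqp(string connectionString)
    {
        string transport;
        return Parse(connectionString).TryGetValue("TransportType", out transport)
            && string.Equals(transport, "Amqp", StringComparison.OrdinalIgnoreCase);
    }

    public static void Main()
    {
        string baseString = "Endpoint=sb://example.servicebus.windows.net/;" +
            "SharedAccessKeyName=RootManageSharedAccessKey;SharedAccessKey=abc123=";
        Console.WriteLine(UsesAmqp(baseString + ";TransportType=Amqp")); // prints True
        Console.WriteLine(UsesAmqp(baseString));                         // prints False
    }
}
```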

Azure Event Hubs

When our event-based messaging needs to be handled at a very large scale, we can either keep paying more to use Azure Service Bus or switch to Event Hubs. Event Hubs is a cheaper way to deal with huge bursts of messages and to retain messages for a longer period of time.

Although Event Hubs does not support MQTT, it does support AMQP and HTTP, with a limit of 5,000 concurrent AMQP connections.

Event Hubs’ event and telemetry handling capabilities, such as ingesting millions of events per second, make it especially useful for IoT scenarios. However, since it is ingestion-only, Event Hubs has no facility for sending traffic in the other direction, for example from the cloud back to the devices (C2D).

Azure IoT Hub

Since Event Hubs only enables event ingress, i.e. D2C (Device-to-Cloud), Azure offers another service, IoT Hub, for reliable and secure communication in both directions: D2C and C2D (Cloud-to-Device). Besides allowing bi-directional communication, IoT Hub also supports AMQP, HTTP, and MQTT.

IoT Hub has an identity registry that stores information about the devices permitted to connect to the IoT Hub. Before a device can connect to an IoT Hub, there must be an entry for that device in the identity registry of the IoT Hub.

In a Hello World tutorial on connecting a simulated device to IoT Hub using C#, there is a way to add a device and retrieve its identity programmatically, as shown below.

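    // registryManager is assumed to have been created beforehand with
    // RegistryManager.CreateFromConnectionString(connectionString).
    private static async Task AddDeviceAsync()
    {
        string deviceId = "gclRasPi2";
        Device device;
        try
        {
            // Register a new device identity in the IoT Hub identity registry.
            device = await registryManager.AddDeviceAsync(new Device(deviceId));
        }
        catch (DeviceAlreadyExistsException)
        {
            // The device is already registered, so fetch its existing identity.
            device = await registryManager.GetDeviceAsync(deviceId);
        }
        Console.WriteLine("Generated device key: {0}", device.Authentication.SymmetricKey.PrimaryKey);
    }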

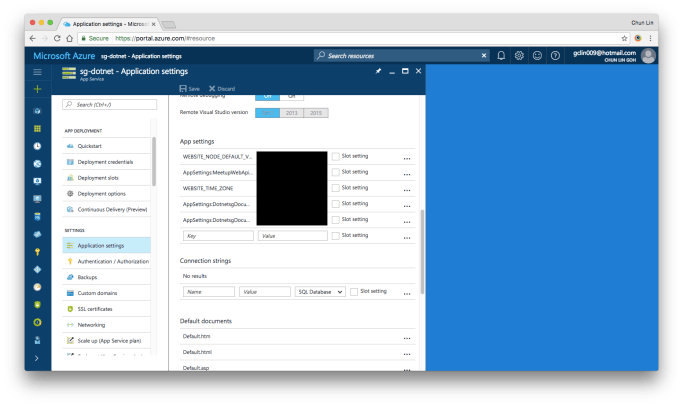

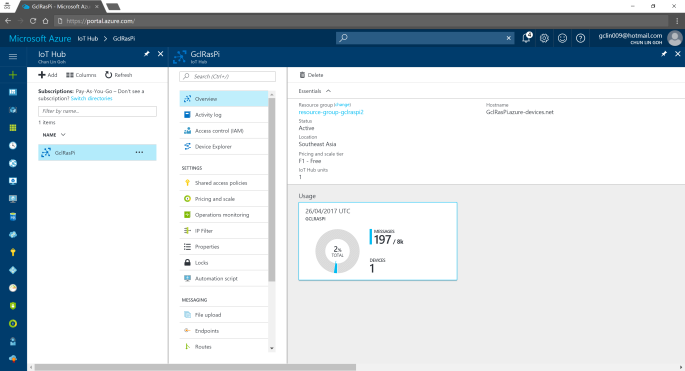

The Registry Manager, which connects to the IoT Hub using a connection string with the proper policy, adds a device identity with the device ID “gclRasPi2”, which then shows up in the Device Explorer in Azure.

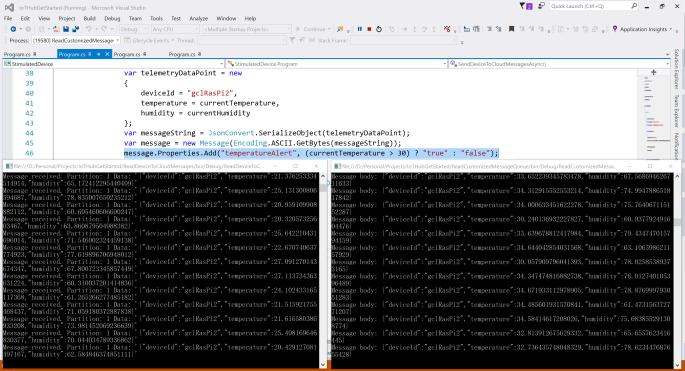

After doing so, a message can be sent from the (simulated) device to the IoT Hub. For example, if the device wants to send the current temperature and humidity using MQTT, we can use the following code.

    // iotHubUri, deviceKey, currentTemperature, and currentHumidity are
    // defined elsewhere in the simulated device app.
    var deviceClient = DeviceClient.Create(
        iotHubUri,
        new DeviceAuthenticationWithRegistrySymmetricKey("gclRasPi2", deviceKey),
        TransportType.Mqtt);

    var telemetryDataPoint = new
    {
        deviceId = "gclRasPi2",
        temperature = currentTemperature,
        humidity = currentHumidity
    };

    // Serialize the data point to JSON and wrap it in an IoT Hub message.
    var messageString = JsonConvert.SerializeObject(telemetryDataPoint);
    var message = new Message(Encoding.ASCII.GetBytes(messageString));

    // Application property that message routing rules can later query.
    message.Properties.Add("temperatureAlert", (currentTemperature > 30) ? "true" : "false");

    await deviceClient.SendEventAsync(message);

To read the message, please follow the steps shared by the tutorial on setting up to read data-point messages.

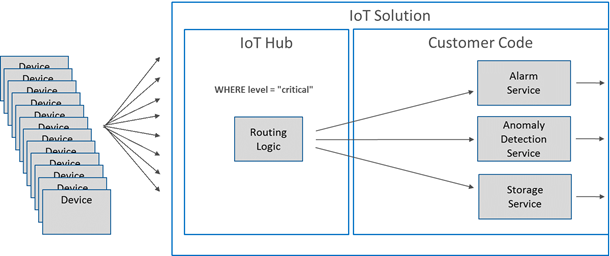

Message Routing

Besides reading normal data-point messages, what really interests me is another tutorial about message processing with Message Routing.

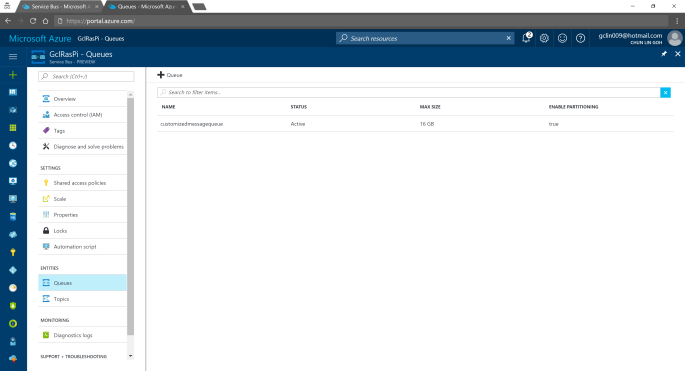

According to the tutorial, we first need to set up a Service Bus queue in the same Azure subscription and region as our IoT Hub.

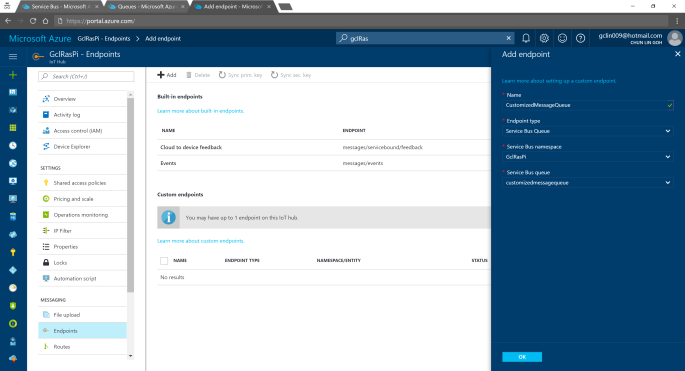

We can then add an endpoint in the IoT Hub for the queue we just created. As shown in the following screenshot, there is a message saying that “You may have up to 1 endpoint on the IoT hub.” This is because I am using the free IoT Hub. Even on the paid tiers, at most 10 custom endpoints are allowed.

Interestingly, each Azure subscription can have at most 10 IoT Hubs, and at most 1 free IoT Hub.

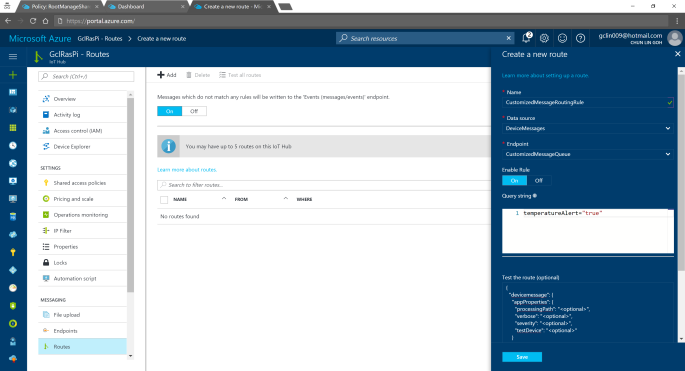

After adding the endpoint, we need to set up the message routing. On the free tier, we can only have 5 routing rules.

In the query string, I used temperatureAlert = “true” as the condition. Also, as shown on the screenshot above, there is a line saying “Messages which do not match any rules will be written to the ‘Events (messages/events)’ endpoint.” Hence, the following two console applications show different results: the left one is connected to the messages/events endpoint, while the right one shows the messages that match the CustomizedMessageRoutingRule created above.

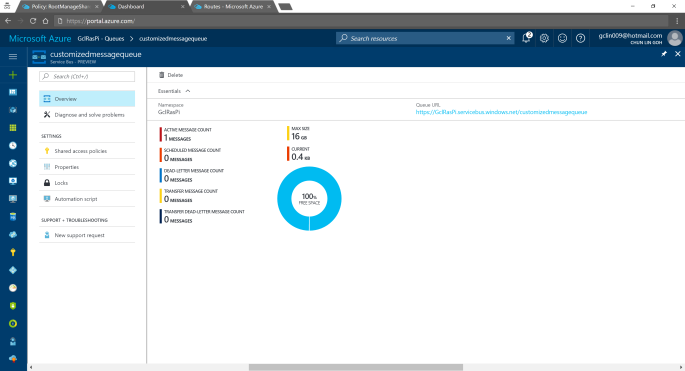
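The split between the two consoles can be mimicked with a small in-memory sketch. This is not how IoT Hub evaluates its query language. `RoutingRule`, `RoutingSketch`, and the endpoint name `alert-queue` are hypothetical, and the sketch assumes that a message matching several rules goes to every matching endpoint. The fallback to `messages/events` follows the portal message quoted above.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical rule type: an endpoint plus a condition on message properties.
public sealed class RoutingRule
{
    public string EndpointName { get; set; }
    public Func<IDictionary<string, string>, bool> Condition { get; set; }
}

public static class RoutingSketch
{
    public const string FallbackEndpoint = "messages/events";

    // Returns the endpoints a message with these properties would be sent to.
    public static List<string> Route(
        IDictionary<string, string> properties, IEnumerable<RoutingRule> rules)
    {
        var endpoints = new List<string>();
        foreach (RoutingRule rule in rules)
        {
            if (rule.Condition(properties)) endpoints.Add(rule.EndpointName);
        }
        // No rule matched: the message falls through to the built-in endpoint.
        if (endpoints.Count == 0) endpoints.Add(FallbackEndpoint);
        return endpoints;
    }

    public static void Main()
    {
        var rules = new List<RoutingRule>
        {
            new RoutingRule
            {
                EndpointName = "alert-queue", // hypothetical endpoint name
                Condition = p =>
                {
                    string value;
                    return p.TryGetValue("temperatureAlert", out value) && value == "true";
                }
            }
        };

        var hot = new Dictionary<string, string> { { "temperatureAlert", "true" } };
        var normal = new Dictionary<string, string> { { "temperatureAlert", "false" } };

        Console.WriteLine(string.Join(", ", Route(hot, rules)));    // prints alert-queue
        Console.WriteLine(string.Join(", ", Route(normal, rules))); // prints messages/events
    }
}
```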

Now if we visit the Service Bus Queue page and IoT Hub page again, we will see some updates on the numbers.

Conclusion

That’s all for my first try at Azure IoT Hub after attending the workshop delivered by Gerald. It was a great lunchtime workshop.

For those who are interested, there is an article from Microsoft on the benefits of using the Azure IoT Hub service. You can read it to understand more.

This is just the beginning of my IoT learning journey. There are still more things for me to learn, such as Azure Stream Analytics and the Microsoft Azure IoT Suite, which is briefly brought up in the booklet mentioned above.

If you spot any mistakes in this article, or you have more to say about IoT, and in particular IoT in the Azure ecosystem, please share with me. =)