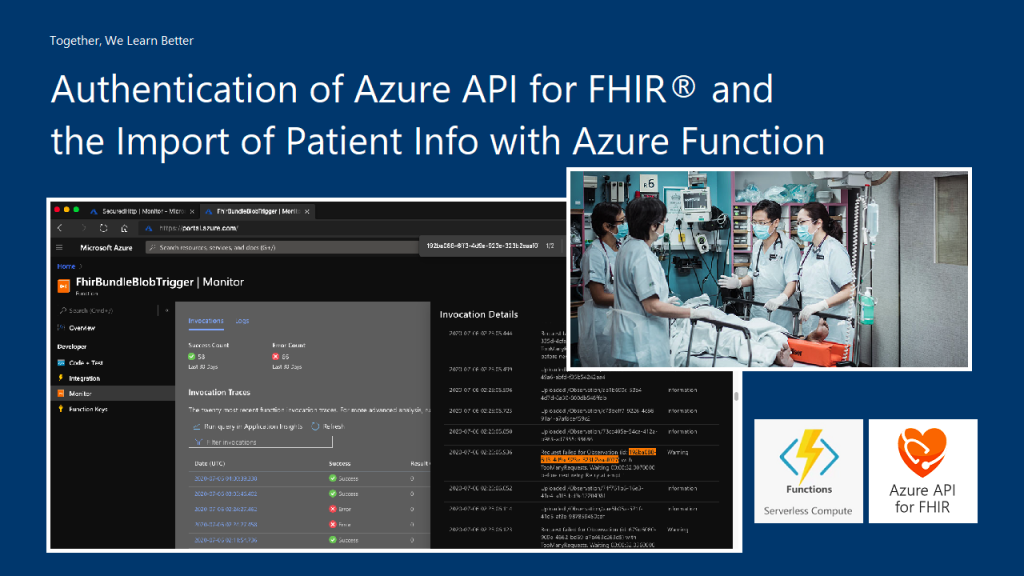

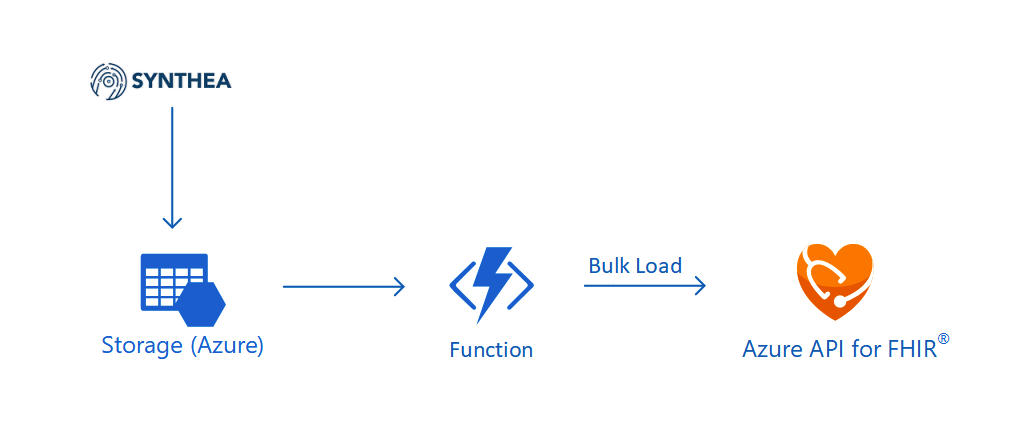

In our previous article, we imported realistic (but not real) patient data into the database of our Azure API for FHIR. The next step, which we go through in this article, is to build a user-friendly dashboard that displays that healthcare data.

For the frontend, there are many open-source web frameworks to choose from. For example, in our earlier COVID-19 dashboard project, we used Material Design from Google.

In this project, to keep our healthcare dashboard consistent with other Microsoft web apps, we will follow the Microsoft design system.

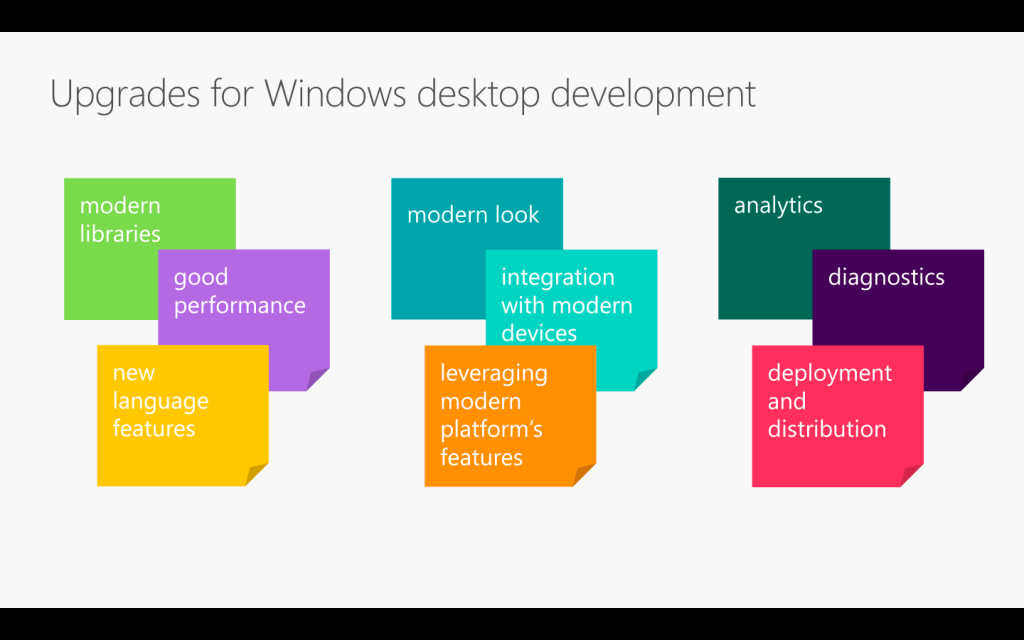

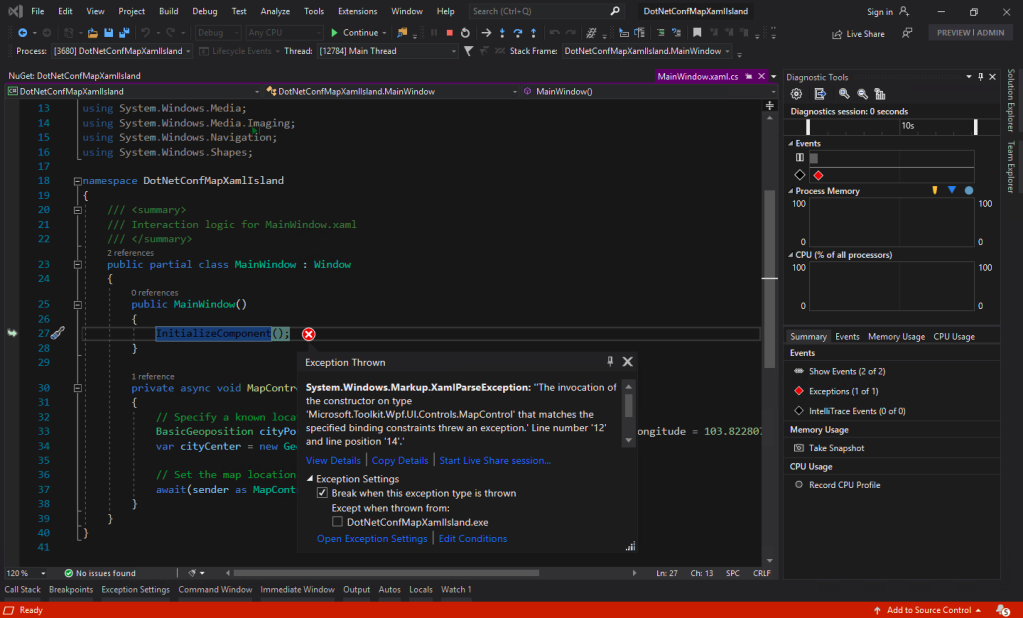

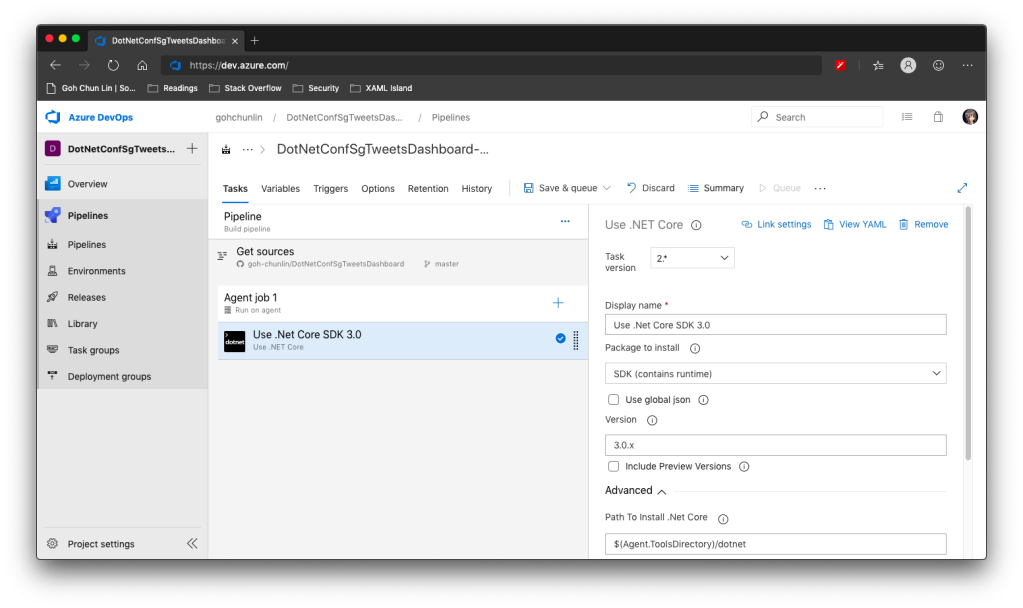

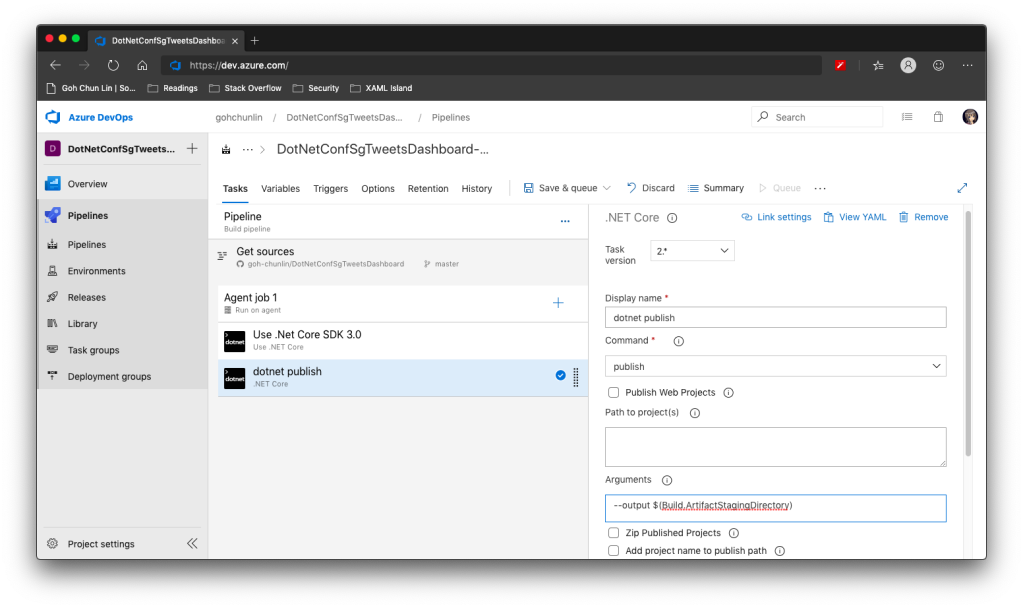

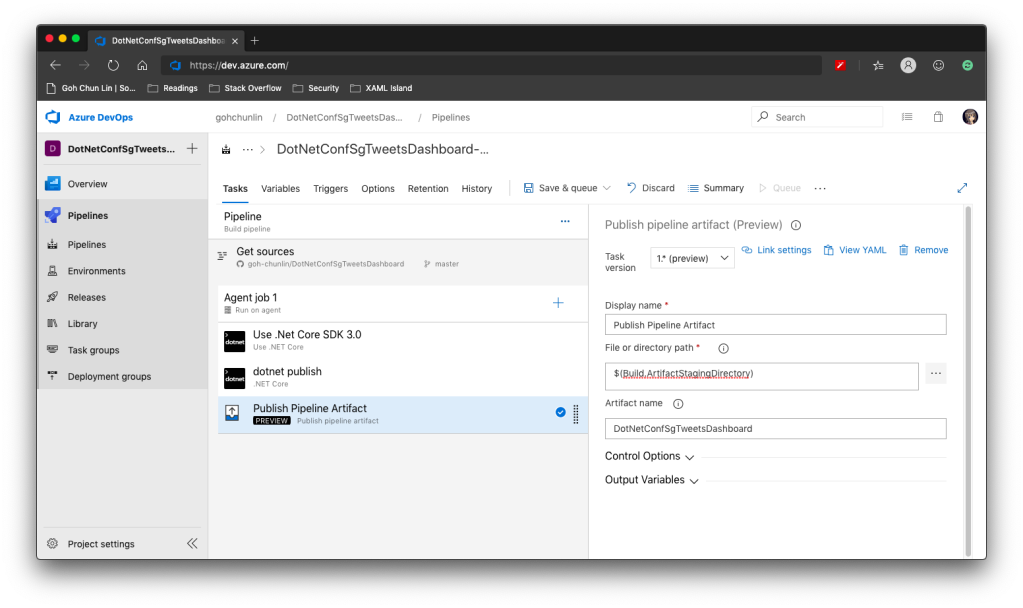

For the backend of the dashboard, we will be using ASP.NET Core 3.1. Michael Hansen has provided a sample on GitHub showing how to write a client app that consumes Azure API for FHIR, but it is a JavaScript app. So I think another sample showing how to do it with ASP.NET Core will be helpful to other developers as well.

FAST Framework and Web Components

Last week on 7th July, Rob Eisenberg from Microsoft introduced the FAST Framework during the .NET Community Standup. The FAST Framework, where FAST stands for Functional, Adaptive, Secure, and Timeless, is an adaptive interface system for modern web experiences.

In web app development projects, we constantly need to add buttons, dropdowns, and checkboxes to our web apps. In a large team, keeping the UI consistent across different web apps, which may be built with different frontend frameworks, becomes a problem we need to solve. What excites me about the FAST Framework is that it solves this problem with Web Components, which can be used with any frontend framework.

All modern browsers now support Web Components. The term Web Components refers to a suite of technologies that let us create reusable custom elements, with their functionality encapsulated away from the rest of our code, and use them in our web apps. Hence, using Web Components in our web app increases the reusability, testability, and reliability of our code.

Web Components integrate well with major frontend frameworks, such as Angular, Blazor, and Vue. We can also drop Web Components into ASP.NET web projects easily, and that is what we are going to do in our healthcare dashboard project.

FAST Design System Provider

Another cool thing in the FAST Framework is the Design System Provider, a component that gives us a convenient mechanism to surface design system values to UI components and to change those values where desired.

In the FAST Framework, the Web Component that corresponds to the design system provider is called the FASTDesignSystemProvider. Its design system properties can be overridden simply by setting the value of the corresponding property on the provider. For example, changing the background of the FASTDesignSystemProvider from light to dark automatically switches the components from light mode to dark mode, and the corresponding colour scheme is applied.

UI Fabric and Fluent UI Core

In August 2015, Microsoft released the GA version of Office UI Fabric on GitHub. The goal of Office UI Fabric is to give frontend developers a mobile-first, responsive frontend framework, similar to Bootstrap, for creating web experiences.

Office UI Fabric speaks the Office Design Language. If you have used any Office-powered web app, such as Outlook or OneDrive, the Office web layout should be very familiar to you. So by using Office UI Fabric, we can easily give our web apps an Office-like user interface and user experience.

In order to deliver a more coherent and productive experience, Microsoft later released the Fluent Design System, another cross-platform design system. Then, in March 2020, as a move towards a simplified developer ecosystem, Office UI Fabric evolved into Fluent UI.

Fluent UI can be used in both web and mobile apps. For the web platform, it comes with two options: Fabric UI React and Fabric Core.

Fabric UI React is meant for React applications, while Fabric Core is provided primarily for non-React web apps and static web pages. Since our healthcare dashboard will be built on top of ASP.NET Core 3.1, Fabric Core is sufficient for our project.

However, because some components, such as ms-Navbar and ms-Table, are still only available in Office UI Fabric and not in Fabric Core, our healthcare dashboard will use both CSS libraries.

Azure CDN

A CDN (Content Delivery Network) is a distributed network of servers that work together to deliver Internet content quickly. The servers are normally spread across the globe so that each user is served from a location close to them, which lets users around the world view the same high-quality content without slow loading times. Hence, it is normally recommended to use a CDN to serve all our static files.

Another reason not to host static files on our web servers is that we would like to avoid extra HTTP requests to those servers just to load static files such as images and CSS.

Fortunately, Azure has a service called Azure CDN, which offers a global CDN that caches content on edge servers in point-of-presence locations close to end users, to minimise latency.

To use Azure CDN, firstly, we need to store all the necessary static files in a container of our Storage account. We will reuse the same Storage account that we are using to store the realistic (but not real) patient data generated by Synthea(TM).

Secondly, we proceed to create Azure CDN.

Thirdly, we add an endpoint to the Azure CDN, as shown in the following screenshot, to point to the container that stores all our static files.

Finally, we can access the static files through the Azure CDN endpoint. For example, to get the Office UI Fabric CSS, we will use the following URL.

https://lunar-website-statics.azureedge.net/fabric.min.css

There is already a very clear quick-start tutorial on Microsoft Docs that you can refer to if you are interested in finding out more about integrating Azure CDN with Azure Storage.

Options Pattern in ASP .NET Core

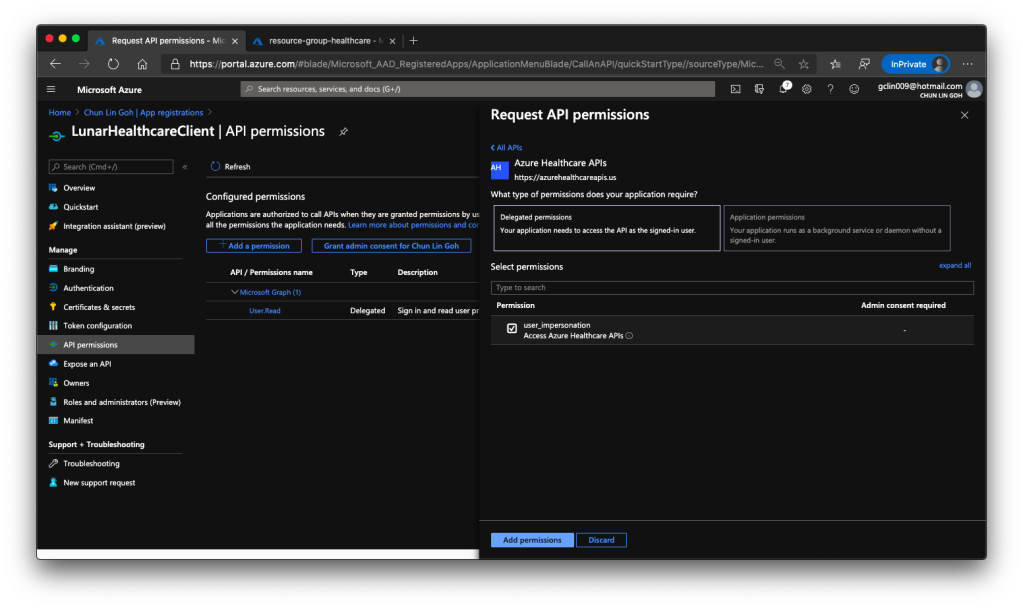

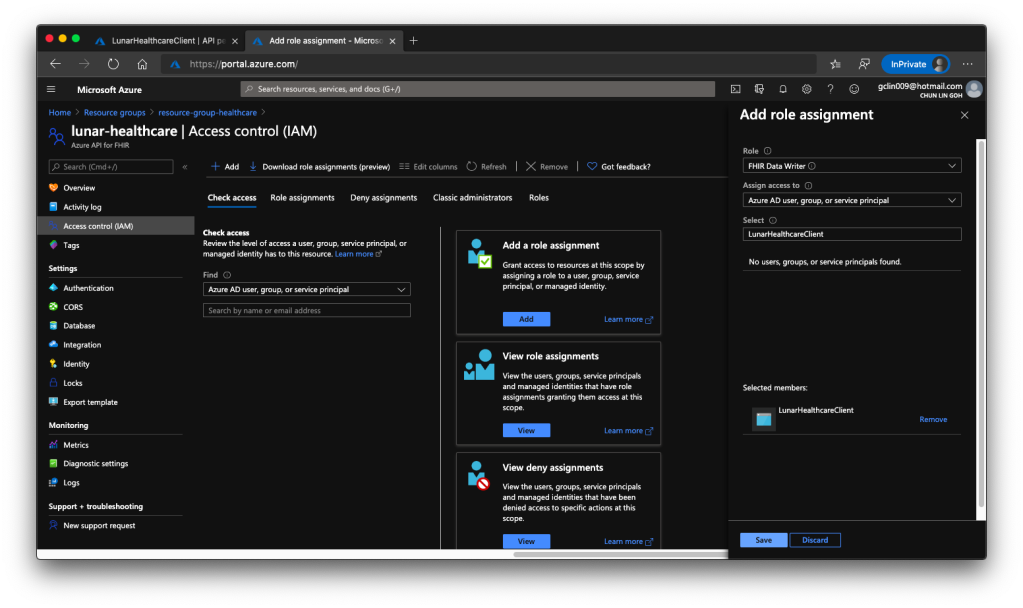

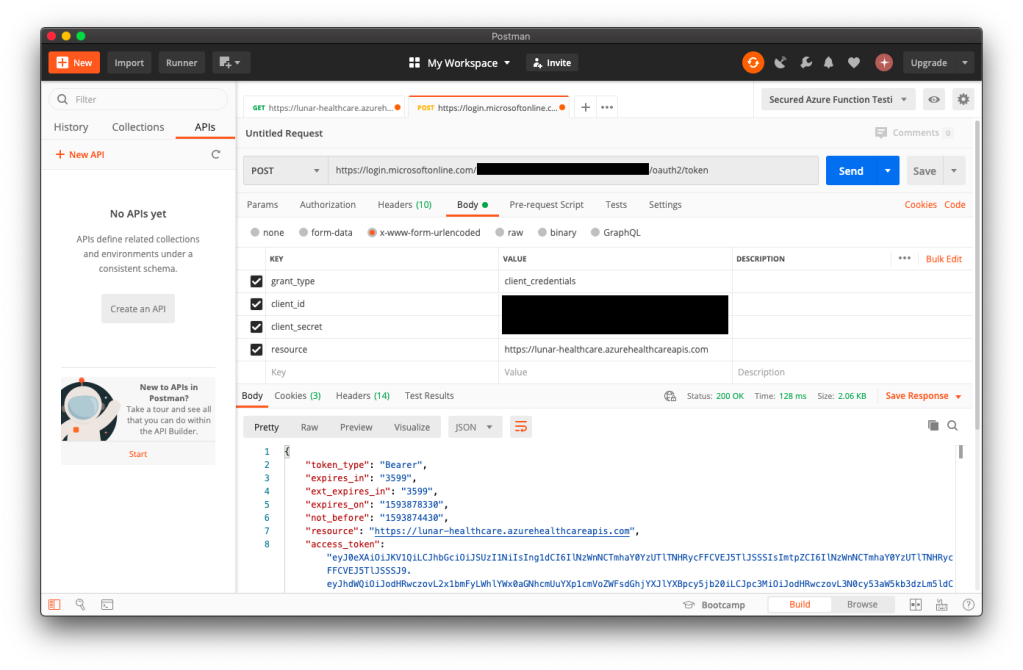

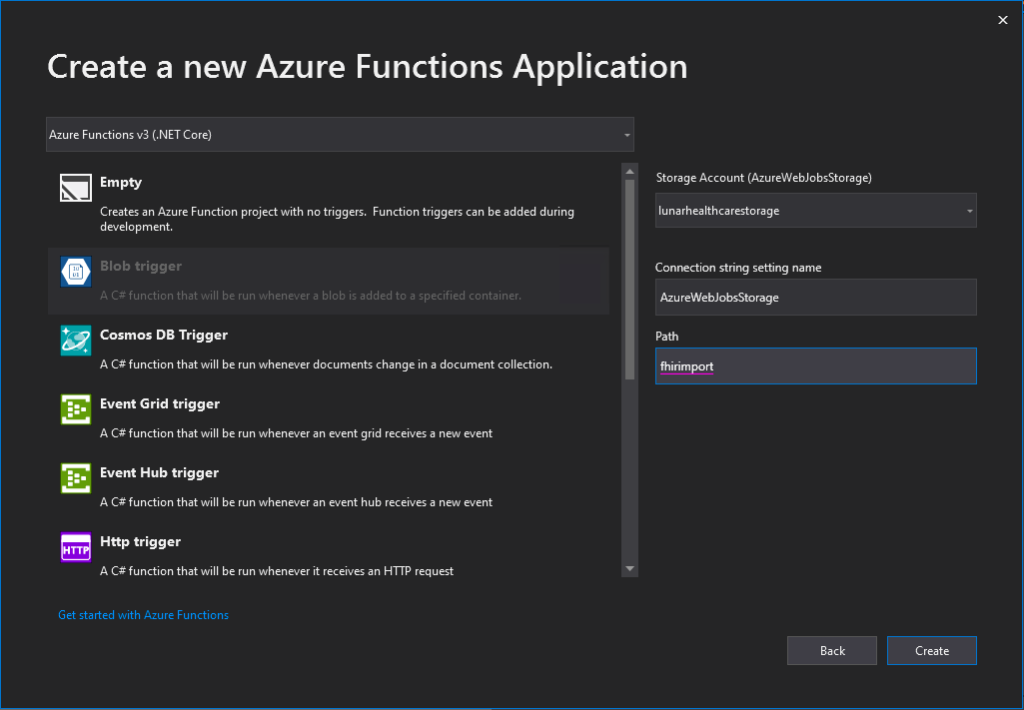

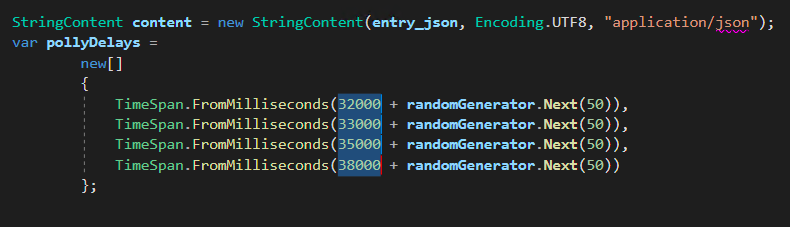

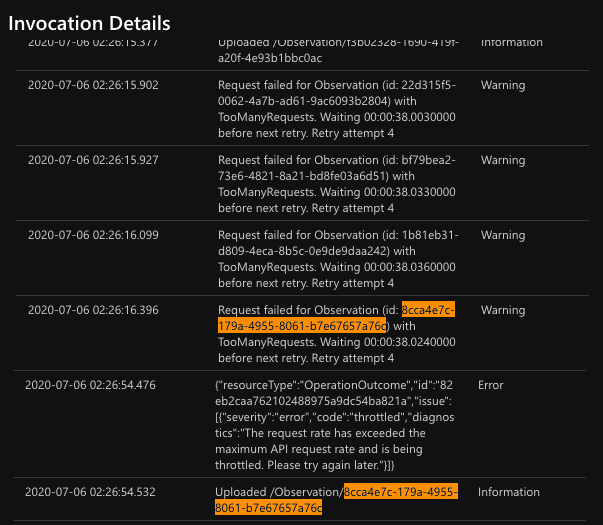

Similar to the Azure Function we deployed in the previous article, the dashboard will send GET requests to different endpoints of the Azure API for FHIR to request different resources. However, before we can do that, we need to get an access token from Azure Active Directory. The steps to do so are summarised in the previous article as well.

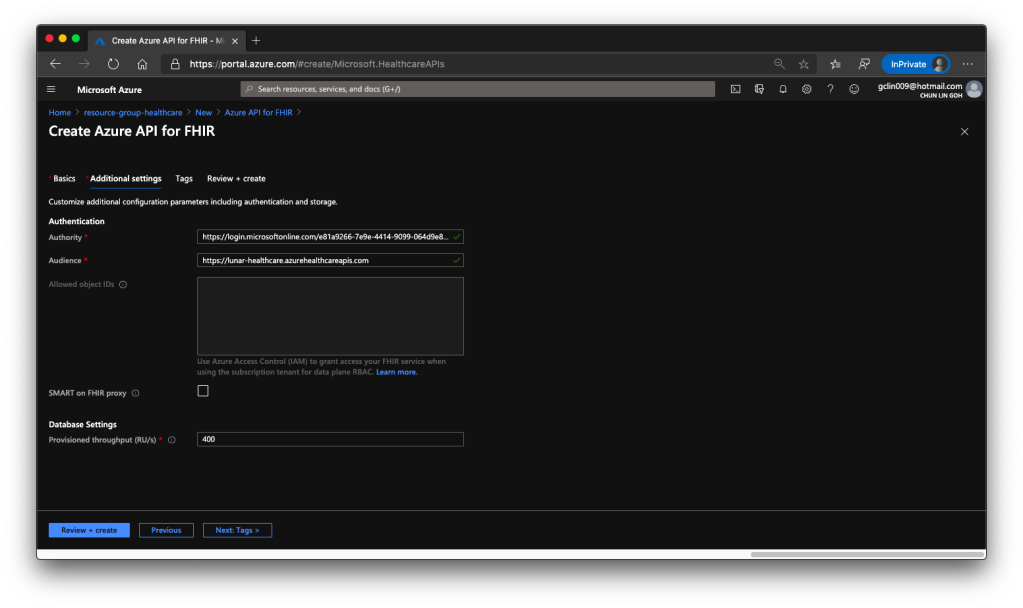

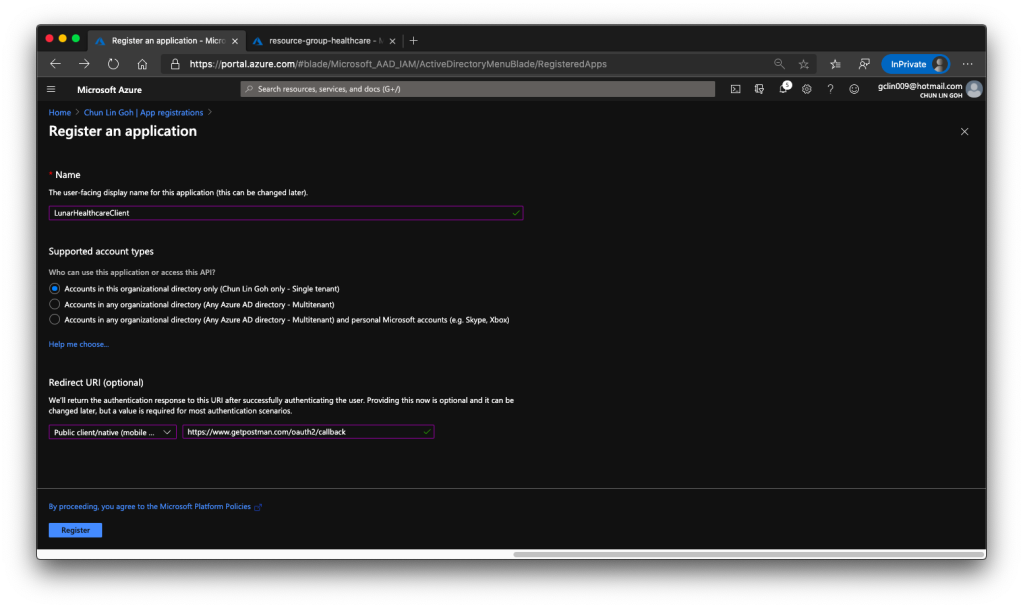

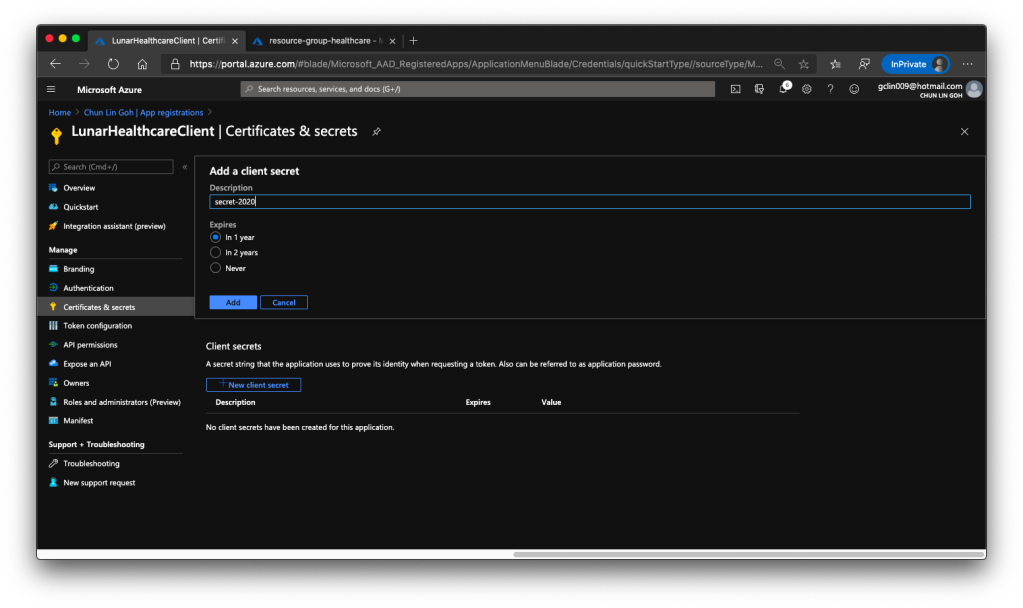

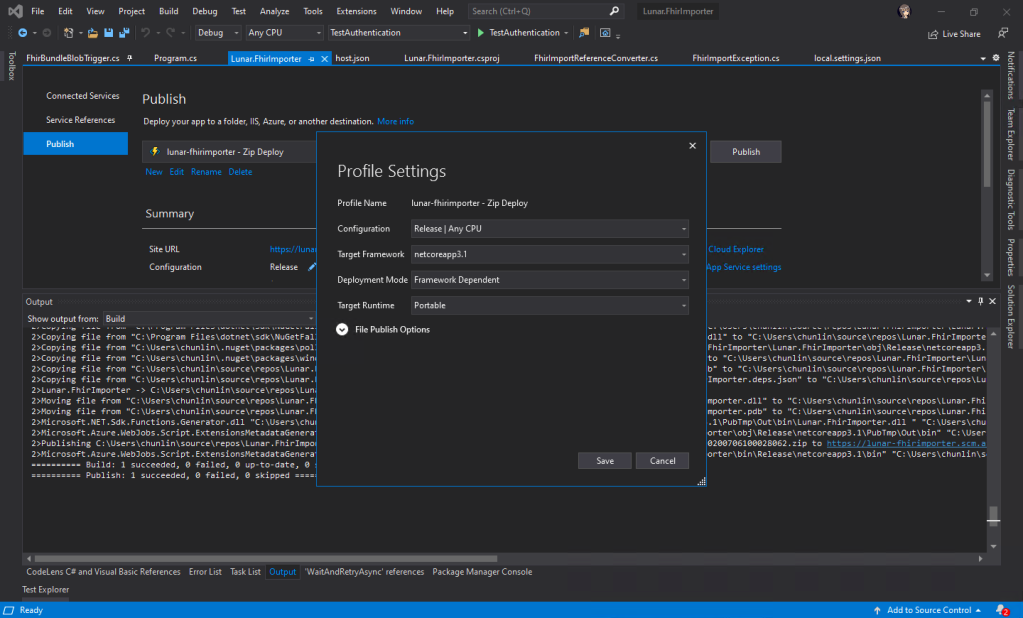

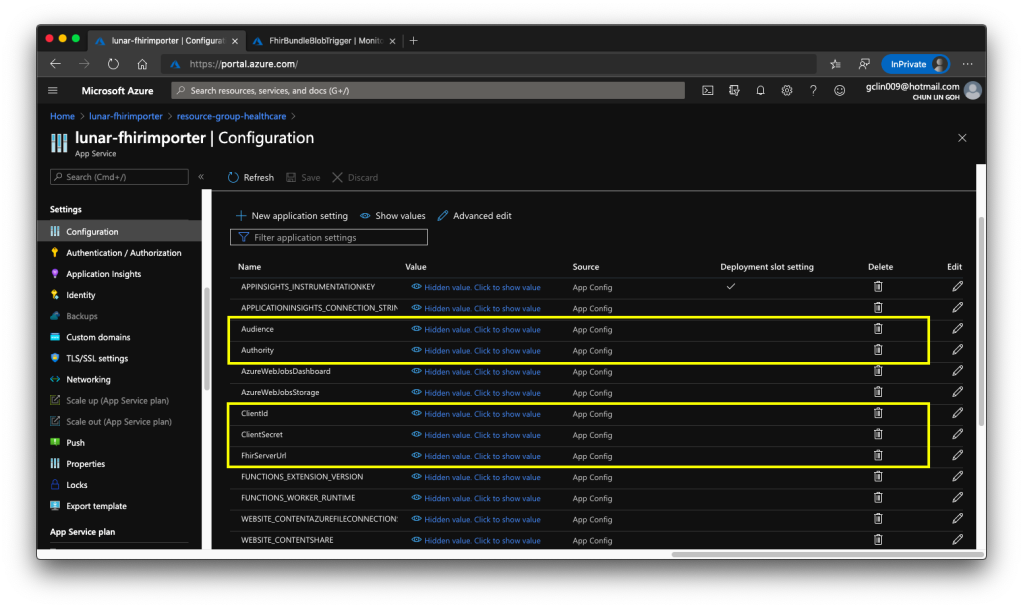

Since we need application settings such as Authority, Audience, Client ID, and Client Secret to retrieve the access token, we will store them in appsettings.Development.json for local debugging. When we later deploy the dashboard to Azure Web App, we will store the settings in its Application Settings.

Then, instead of reading from the settings file directly using IConfiguration, we choose to use the Options pattern, which gives us strongly typed access to groups of related settings and also provides a mechanism to validate the configuration data. For example, the settings we have in our appsettings.Development.json are as follows.

    {
      "Logging": {
        ...
      },
      "AzureApiForFhirSetting": {
        "Authority": "...",
        "Audience": "...",
        "ClientId": "...",
        "ClientSecret": "..."
      },
      ...
    }

We will then create a class that will be bound to the AzureApiForFhirSetting section.

    public class AzureApiForFhirSetting {
        public string Authority { get; set; }
        public string Audience { get; set; }
        public string ClientId { get; set; }
        public string ClientSecret { get; set; }
    }

Finally, to set up the binding, we need to add the following lines to ConfigureServices in Startup.cs.

    // This method gets called by the runtime. Use this method to add services to the container.
    public void ConfigureServices(IServiceCollection services)
    {
        services.AddOptions();
        services.Configure<AzureApiForFhirSetting>(Configuration.GetSection("AzureApiForFhirSetting"));
        ...
        services.AddControllersWithViews();
    }
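
The validation mechanism mentioned earlier can be sketched roughly as follows. This is only a minimal illustration, not what the dashboard project does: the ValidatedFhirSetting class and the OptionsValidationSketch helper are hypothetical names, and the in-memory configuration simply stands in for appsettings.Development.json. It assumes the Microsoft.Extensions.* packages that ship with ASP.NET Core 3.1 are available.

```csharp
using System.Collections.Generic;
using System.ComponentModel.DataAnnotations;
using Microsoft.Extensions.Configuration;
using Microsoft.Extensions.DependencyInjection;
using Microsoft.Extensions.Options;

// Hypothetical variant of AzureApiForFhirSetting with validation attributes.
public class ValidatedFhirSetting
{
    [Required] public string Authority { get; set; }
    [Required] public string Audience { get; set; }
    [Required] public string ClientId { get; set; }
    [Required] public string ClientSecret { get; set; }
}

public static class OptionsValidationSketch
{
    // Returns true when the "AzureApiForFhirSetting" section binds to a valid object.
    public static bool IsValid(IDictionary<string, string> values)
    {
        // In-memory values stand in for the JSON settings file.
        var configuration = new ConfigurationBuilder()
            .AddInMemoryCollection(values)
            .Build();

        var services = new ServiceCollection();
        services.AddOptions<ValidatedFhirSetting>()
            .Bind(configuration.GetSection("AzureApiForFhirSetting"))
            .ValidateDataAnnotations();

        using var provider = services.BuildServiceProvider();
        try
        {
            // Validation runs when the options value is first created.
            _ = provider.GetRequiredService<IOptions<ValidatedFhirSetting>>().Value;
            return true;
        }
        catch (OptionsValidationException)
        {
            return false;
        }
    }
}
```

With this approach, a missing setting surfaces as an OptionsValidationException the first time the options are read, rather than as a confusing failure later when the token request is sent.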

After that, we inject IOptions<AzureApiForFhirSetting> into the classes that need to use the configuration, as shown in the following example.

    public class AzureApiForFhirService : IAzureApiForFhirService
    {
        private readonly AzureApiForFhirSetting _azureApiForFhirSetting;

        public AzureApiForFhirService(IOptions<AzureApiForFhirSetting> azureApiForFhirSettingAccessor)
        {
            _azureApiForFhirSetting = azureApiForFhirSettingAccessor.Value;
        }

        ...
    }
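
To give an idea of how these settings might be used, here is a minimal sketch of requesting the access token with the OAuth 2.0 client credentials flow. It rests on several assumptions that are not confirmed by this article: that Authority has the form https://login.microsoftonline.com/<tenant-id>, that the Azure AD v1 token endpoint (/oauth2/token) is used, and that Audience is sent as the resource parameter. The AccessTokenSketch class and its method names are hypothetical.

```csharp
using System.Collections.Generic;
using System.Net.Http;
using System.Text.Json;
using System.Threading.Tasks;

public static class AccessTokenSketch
{
    // Builds the form fields of a client credentials token request.
    // Assumption: the Audience setting is passed as the "resource" parameter.
    public static Dictionary<string, string> BuildTokenRequestForm(
        string audience, string clientId, string clientSecret)
    {
        return new Dictionary<string, string>
        {
            ["grant_type"] = "client_credentials",
            ["client_id"] = clientId,
            ["client_secret"] = clientSecret,
            ["resource"] = audience
        };
    }

    // Posts the form to the token endpoint and returns the access_token value.
    // Assumption: the token endpoint is "<Authority>/oauth2/token".
    public static async Task<string> RequestAccessTokenAsync(
        HttpClient httpClient, string authority, string audience,
        string clientId, string clientSecret)
    {
        var tokenEndpoint = authority.TrimEnd('/') + "/oauth2/token";

        using var content = new FormUrlEncodedContent(
            BuildTokenRequestForm(audience, clientId, clientSecret));
        using var response = await httpClient.PostAsync(tokenEndpoint, content);
        response.EnsureSuccessStatusCode();

        var json = await response.Content.ReadAsStringAsync();
        using var document = JsonDocument.Parse(json);
        return document.RootElement.GetProperty("access_token").GetString();
    }
}
```

The returned token would then be sent as a Bearer token in the Authorization header of every request to the Azure API for FHIR.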

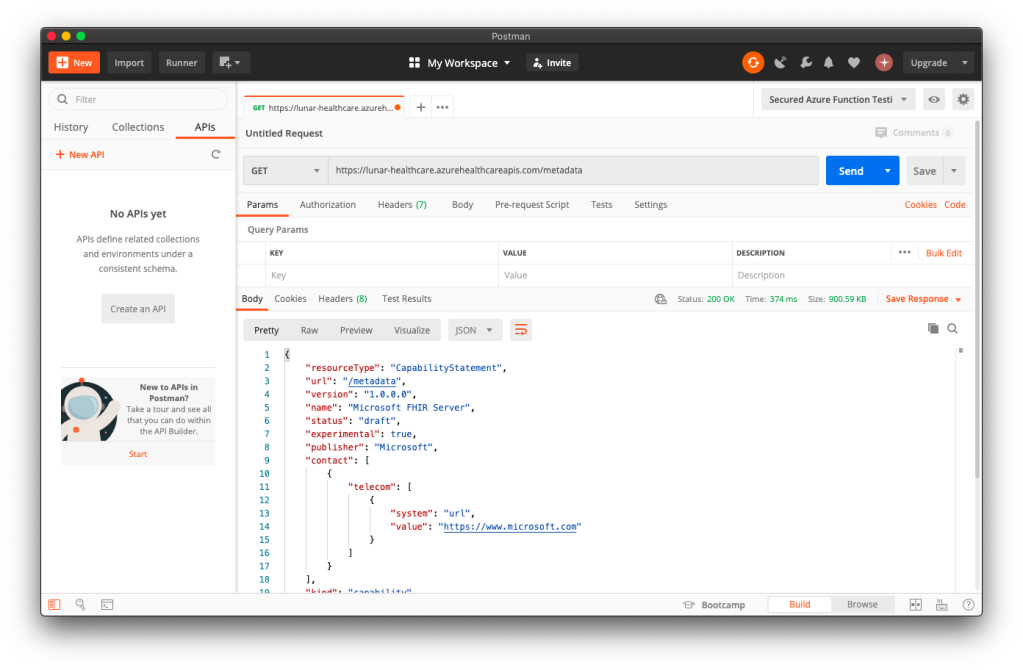

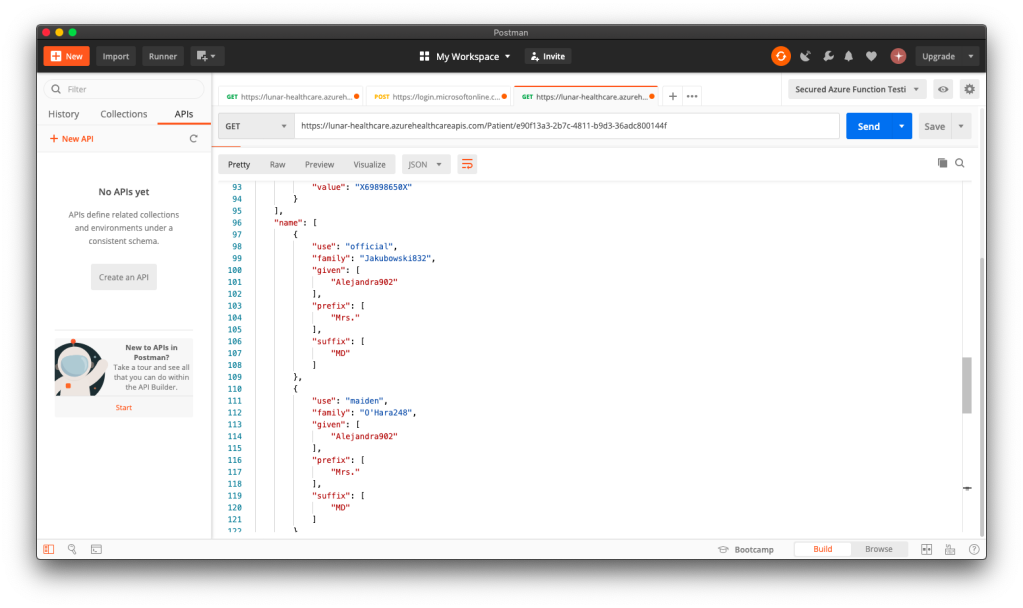

Once we can get the access token, we will be able to access the Azure API for FHIR. Let’s see some of the endpoints the API has.

Azure API for FHIR: The Patient Endpoint

Retrieving all the patients in the database is very easy: we simply send a GET request to the /patient endpoint. By default, the API returns at most 10 records. To retrieve the next 10 records, we need to send another GET request to the URL returned by the API, as highlighted in the red box in the following example screenshot.

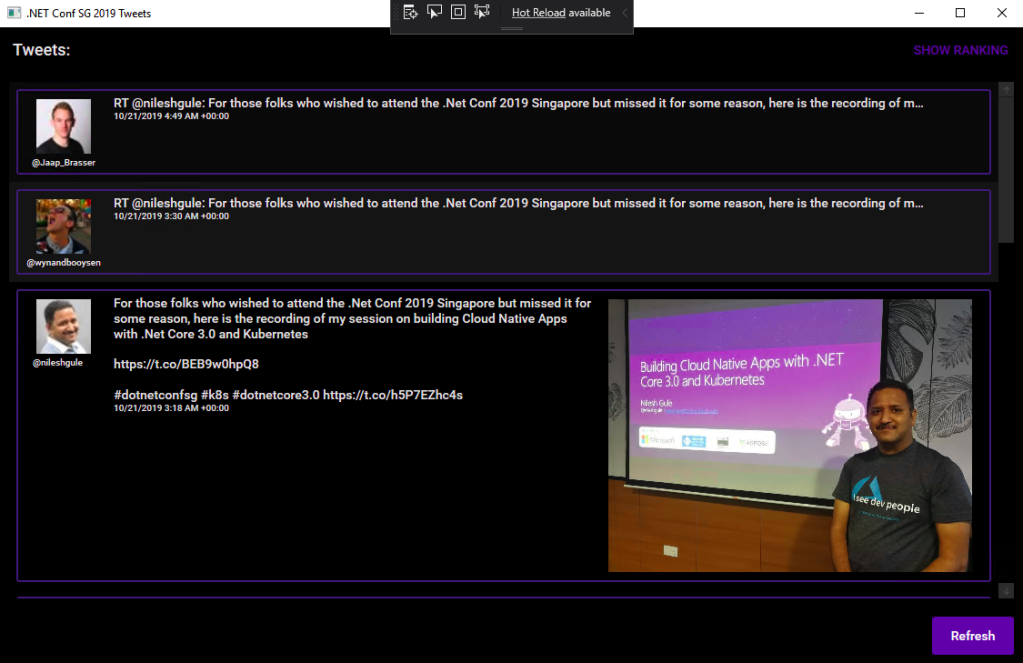
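
Following that link in code could look roughly like the sketch below. In a FHIR Bundle, the URL of the next page is carried in the link array under the relation "next". The BundlePagingSketch class and its method names are hypothetical, and the HttpClient is assumed to already carry the Bearer token in its default request headers.

```csharp
using System.Collections.Generic;
using System.Net.Http;
using System.Text.Json;
using System.Threading.Tasks;

public static class BundlePagingSketch
{
    // Returns the URL of the "next" link in a FHIR Bundle, or null on the last page.
    public static string GetNextPageUrl(string bundleJson)
    {
        using var document = JsonDocument.Parse(bundleJson);

        if (!document.RootElement.TryGetProperty("link", out var links)
            || links.ValueKind != JsonValueKind.Array)
        {
            return null;
        }

        foreach (var link in links.EnumerateArray())
        {
            if (link.TryGetProperty("relation", out var relation)
                && relation.GetString() == "next"
                && link.TryGetProperty("url", out var url))
            {
                return url.GetString();
            }
        }

        return null;
    }

    // Downloads every page by following the "next" links until none is left.
    // Assumption: httpClient already sends the Authorization header.
    public static async Task<List<string>> GetAllPagesAsync(
        HttpClient httpClient, string firstPageUrl)
    {
        var pages = new List<string>();
        var url = firstPageUrl;

        while (url != null)
        {
            var json = await httpClient.GetStringAsync(url);
            pages.Add(json);
            url = GetNextPageUrl(json);
        }

        return pages;
    }
}
```

For a dashboard, it is usually nicer to fetch one page per request and render a "Next" button from the returned link, rather than downloading every page up front.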

Once we have all the patients, we then can list them out in a nice table designed with Office UI Fabric, as shown in the following screenshot.

When we click on the link “View Profile” of a record, we then can get more details about the selected patient. To retrieve the info of a particular patient, we need to pass the ID to the /patient endpoint, as shown in the following screenshot, which is highlighted in a red box.

Where can we get the patient’s ID? The ID is returned, for example, when we get the list of all patients.

So after we click on the “View Profile”, we will then be able to reach a Patient page which shows more details about the selected patient, as shown in the following screenshot.

Azure API for FHIR: The Other Endpoints

There are many resources available in the Azure API for FHIR, and Patient is just one of them. We also have Condition, Encounter, Observation, and so on.

Getting entries from the endpoints corresponding to the three resources listed above is quite straightforward. However, if we directly send a GET request to, let’s say, /condition, what we get is the Condition records of all the patients in the database.

In order to filter based on the patient, we need to add a query string called patient to the endpoint URL, for example /condition?patient=, and then we append the patient ID to the URL.

Then we will be able to retrieve the resources of that particular patient, as shown in the following screenshot.
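
As a small sketch, the filtered URL can be built like this. The PatientFilterSketch class, the method name, and the example base URL are all hypothetical.

```csharp
using System;

public static class PatientFilterSketch
{
    // Builds "<base>/<endpoint>?patient=<id>", escaping the patient ID for use in a query string.
    public static string BuildPatientFilterUrl(
        string fhirBaseUrl, string resourceEndpoint, string patientId)
    {
        return $"{fhirBaseUrl.TrimEnd('/')}/{resourceEndpoint.Trim('/')}"
            + $"?patient={Uri.EscapeDataString(patientId)}";
    }
}
```

The same helper works for the other endpoints that accept the patient query string, for example by passing "/encounter" or "/observation" as the endpoint.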

So far I have only tried these four endpoints. The /observation endpoint is quite tricky because the value it returns is, most of the time, a single number for a measurement. However, for some other measurements it returns two numbers or text instead. Hence, I have to do some if-else checks on the values returned from the /observation endpoint.
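
Those checks could be sketched along the following lines. This is an illustration based on the standard FHIR Observation fields (valueQuantity for a single number, component for multi-part measurements such as blood pressure, and valueCodeableConcept or valueString for text), not the exact code of the dashboard. The ObservationValueSketch class and the output format are hypothetical.

```csharp
using System.Collections.Generic;
using System.Globalization;
using System.Text.Json;

public static class ObservationValueSketch
{
    // Turns the value of a single Observation resource into display text.
    public static string DescribeValue(string observationJson)
    {
        using var document = JsonDocument.Parse(observationJson);
        var observation = document.RootElement;

        // Case 1: a single measurement, e.g. body weight.
        if (observation.TryGetProperty("valueQuantity", out var quantity))
        {
            return FormatQuantity(quantity);
        }

        // Case 2: several numbers in one observation, e.g. blood pressure.
        if (observation.TryGetProperty("component", out var components)
            && components.ValueKind == JsonValueKind.Array)
        {
            var parts = new List<string>();
            foreach (var component in components.EnumerateArray())
            {
                if (component.TryGetProperty("valueQuantity", out var componentQuantity))
                {
                    parts.Add(FormatQuantity(componentQuantity));
                }
            }

            if (parts.Count > 0)
            {
                return string.Join(" / ", parts);
            }
        }

        // Case 3: a textual result.
        if (observation.TryGetProperty("valueCodeableConcept", out var concept)
            && concept.TryGetProperty("text", out var conceptText))
        {
            return conceptText.GetString();
        }

        if (observation.TryGetProperty("valueString", out var valueString))
        {
            return valueString.GetString();
        }

        return "(no value)";
    }

    // Formats a FHIR Quantity as "<number> <unit>"; the unit is optional.
    private static string FormatQuantity(JsonElement quantity)
    {
        if (!quantity.TryGetProperty("value", out var value))
        {
            return "(no value)";
        }

        var number = value.GetDecimal().ToString(CultureInfo.InvariantCulture);
        return quantity.TryGetProperty("unit", out var unit)
            ? $"{number} {unit.GetString()}"
            : number;
    }
}
```

Keeping the checks in one place like this means the Razor views only ever deal with a display string, whatever shape the Observation has.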

Source Code of the Dashboard

That’s all for the healthcare dashboard that I have built so far. There are still many exciting features we can expect to add now that we have integrated with the Azure API for FHIR.

So, if you would like to check out my code, the dashboard project is now available on GitHub at https://github.com/goh-chunlin/Lunar.HealthcareDashboard. Feel free to raise an issue or submit a PR to the project.

Together, we learn better.